Discover how we fine-tuned Llama 2 to automate COVID-19 patient data analysis and accurately prescribe the right treatment and medication.

RAG Development Services We Offer

As a trusted RAG development company, we build retrieval-augmented generation systems that deliver accurate, grounded AI responses across your enterprise operations. From early-stage consulting to production-grade deployment, every RAG service we offer is designed to reduce hallucinations, improve answer quality, and integrate seamlessly with your existing data infrastructure.

RAG Consulting Services

Not sure where RAG fits in your AI roadmap? Our RAG consulting services assess your data assets, evaluate retrieval requirements, and identify high-ROI use cases for RAG implementation. We design a detailed architecture roadmap covering data sources, embedding strategy, retrieval approach, and LLM selection, aligned with your compliance requirements and long-term objectives.

Custom RAG Pipeline Development

We build custom retrieval-augmented generation pipelines tailored to your specific data types, query patterns, and accuracy requirements. Our engineers design end-to-end RAG pipelines covering document ingestion, intelligent chunking, embedding generation, vector indexing, retrieval logic, and LLM response synthesis. Each pipeline is optimized for precision, low latency, and production reliability under real enterprise workloads.
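To make the pipeline stages above concrete, here is a minimal sketch of ingestion, chunking, embedding, indexing, retrieval, and prompt assembly. It is illustrative only and not our production implementation: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and every function name and sample document is hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text, max_words=40, overlap=10):
    """Split text into overlapping word windows (a simple fixed-size chunker)."""
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break
    return chunks


def embed(text):
    """Toy embedding: term counts. A real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents):
    """Ingest documents: chunk each one and store (doc_id, chunk, vector) rows."""
    index = []
    for doc_id, text in documents.items():
        for chunk in chunk_text(text):
            index.append((doc_id, chunk, embed(chunk)))
    return index


def retrieve(index, query, k=2):
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda row: cosine(q, row[2]), reverse=True)
    return [(doc_id, chunk) for doc_id, chunk, _ in ranked[:k]]


def build_prompt(query, hits):
    """Assemble the grounded prompt that would be sent to an LLM."""
    context = "\n".join(f"[{doc_id}] {chunk}" for doc_id, chunk in hits)
    return f"Answer using only the context below.\n\n{context}\n\nQuestion: {query}"


# Hypothetical sample documents.
docs = {
    "returns-policy": "Customers may return items within 30 days of delivery for a full refund.",
    "shipping-faq": "Standard shipping takes five business days. Express shipping takes two.",
}
index = build_index(docs)
question = "How many days do I have to return an item?"
hits = retrieve(index, question, k=1)
print(build_prompt(question, hits))
```

The final step, sending the prompt to an LLM, is left out on purpose because it depends on the model provider chosen for the project.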

Vector Database Integration and Optimization

Choosing and configuring the right vector database is critical to RAG system performance. We integrate and optimize Pinecone, Weaviate, ChromaDB, Qdrant, Milvus, and pgvector based on your scale, latency requirements, and infrastructure preferences. Our team handles indexing strategy, similarity search tuning, metadata filtering, and hybrid search configuration to maximize retrieval accuracy across your knowledge base.
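As a rough illustration of hybrid search with metadata filtering, the sketch below fuses a keyword ranking and a vector ranking using reciprocal rank fusion (RRF), one common fusion technique. It does not use any vendor API; in practice the filter and fusion usually run inside the vector database itself. The document ids, metadata fields, and function names are hypothetical.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc ids into one list using RRF scores."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken by doc id for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))


def hybrid_search(keyword_ranking, vector_ranking, metadata, required, top_k=3):
    """Apply a metadata filter to both rankings, then fuse them with RRF."""
    def allowed(doc_id):
        return all(metadata[doc_id].get(f) == v for f, v in required.items())

    filtered = [[d for d in r if allowed(d)] for r in (keyword_ranking, vector_ranking)]
    return reciprocal_rank_fusion(filtered)[:top_k]


# Hypothetical rankings produced by a keyword engine and a vector index.
metadata = {
    "a": {"lang": "en"},
    "b": {"lang": "en"},
    "c": {"lang": "de"},
    "d": {"lang": "en"},
}
keyword_ranking = ["c", "a", "b"]
vector_ranking = ["a", "d", "b"]
results = hybrid_search(keyword_ranking, vector_ranking, metadata, {"lang": "en"})
print(results)  # ['a', 'b', 'd']
```

Document `c` ranks first on keywords but is dropped by the language filter, while `a` wins because both rankings agree on it.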

LLM Integration and Fine-tuning for RAG

Our LLM development services integrate GPT-4, Claude, LLaMA, Gemini, and Mistral into your RAG architecture, selecting the right model for your domain requirements and budget. We apply RAG-specific fine-tuning techniques to improve the model’s ability to synthesize retrieved context accurately, reduce hallucinations on domain-specific content, and maintain consistent response quality across diverse query types.

Agentic RAG Development

Need RAG systems that reason and act, not just retrieve? We build agentic RAG architectures where AI agents dynamically plan retrieval strategies, query multiple knowledge sources in sequence, validate retrieved information, and synthesize grounded responses. These systems handle complex multi-step queries, adapt retrieval logic based on initial results, and integrate with external tools and APIs for comprehensive enterprise automation.

RAG System Evaluation and Optimization

We design rigorous evaluation frameworks that measure your RAG system’s retrieval accuracy, answer faithfulness, and contextual relevance before production deployment. Our team benchmarks systems using metrics including precision at K, RAGAS score, and context recall, then optimizes chunking strategies, embedding models, retrieval parameters, and prompt templates to close any performance gaps identified in evaluation.
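Two of the metrics named above can be sketched in a few lines. This is a simplified, id-based version for illustration: precision at K is computed against a labeled set of relevant document ids, and the `context_recall` function here is a set-overlap approximation, not the statement-level calculation that the RAGAS library performs. The ids and function names are hypothetical.

```python
def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved ids that are labeled relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    return hits / k


def context_recall(retrieved, relevant):
    """Fraction of relevant ids that appear anywhere in the retrieved list."""
    if not relevant:
        return 0.0
    return len(set(retrieved) & set(relevant)) / len(relevant)


# Hypothetical evaluation example for a single query.
retrieved = ["d1", "d7", "d3", "d9"]
relevant = {"d1", "d3", "d5"}
print(round(precision_at_k(retrieved, relevant, k=3), 3))  # 0.667
print(round(context_recall(retrieved, relevant), 3))       # 0.667
```

Averaging these values over an evaluation set of real user queries gives a baseline to compare against after each change to chunking, embeddings, or retrieval parameters.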

Multi-modal RAG Development

Your enterprise data extends beyond text. We build multi-modal RAG systems that retrieve and synthesize information from PDFs, images, tables, charts, spreadsheets, and structured databases within a unified pipeline. These systems support complex queries that require cross-modal reasoning, enabling users to ask questions that span multiple document types simultaneously.

RAG Integration with Enterprise Systems

We connect RAG systems to your existing enterprise infrastructure including CRM platforms, ERP systems, SharePoint, Confluence, Salesforce, and internal APIs. Our AI integration services handle secure authentication, real-time data synchronization, role-based access control, and middleware development to ensure seamless data flow between your RAG system and all connected business applications.
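One way role-based access control can be applied to retrieval is to attach an access list to every indexed chunk and filter results before they reach the LLM. The sketch below assumes a hypothetical `Chunk` record and role names; a real deployment would map these roles from your identity provider and would typically enforce the filter inside the vector database query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to see this chunk


def filter_by_role(chunks, user_roles):
    """Keep only chunks whose access list shares at least one role with the user."""
    roles = frozenset(user_roles)
    return [c for c in chunks if c.allowed_roles & roles]


# Hypothetical retrieved chunks and role names.
chunks = [
    Chunk("hr-policy", "Parental leave is 16 weeks.", frozenset({"hr", "admin"})),
    Chunk("public-faq", "Office hours are 9 to 5.", frozenset({"everyone"})),
    Chunk("finance-q3", "Q3 forecast details.", frozenset({"finance"})),
]
visible = filter_by_role(chunks, {"everyone", "hr"})
print([c.doc_id for c in visible])  # ['hr-policy', 'public-faq']
```

Filtering before prompt assembly means restricted content never enters the model context for a user who is not entitled to it.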

RAG Maintenance, Monitoring, and Support

RAG system performance degrades as your knowledge base evolves and query patterns shift. We provide ongoing maintenance covering knowledge base updates, embedding re-indexing, retrieval parameter retuning, and model upgrades. Our monitoring setup tracks retrieval latency, answer quality metrics, and user feedback signals to detect degradation early and maintain system accuracy in production.
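A minimal sketch of the kind of monitoring described above: a rolling window over recent requests that flags high p95 retrieval latency or a falling share of positive user feedback. The class name, thresholds, and window size are assumptions chosen for illustration, not values from a real deployment.

```python
from collections import deque


class RetrievalMonitor:
    """Rolling-window monitor for latency and user feedback (illustrative only)."""

    def __init__(self, window=100, max_p95_ms=800.0, min_positive_rate=0.7):
        self.latencies = deque(maxlen=window)
        self.feedback = deque(maxlen=window)
        self.max_p95_ms = max_p95_ms
        self.min_positive_rate = min_positive_rate

    def record(self, latency_ms, thumbs_up):
        """Store one request's retrieval latency and user feedback signal."""
        self.latencies.append(latency_ms)
        self.feedback.append(bool(thumbs_up))

    def p95_latency(self):
        """Nearest-rank 95th percentile over the window, or None if empty."""
        if not self.latencies:
            return None
        ordered = sorted(self.latencies)
        rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
        return ordered[rank - 1]

    def positive_rate(self):
        """Share of positive feedback over the window, or None if empty."""
        if not self.feedback:
            return None
        return sum(self.feedback) / len(self.feedback)

    def alerts(self):
        """Return the names of any thresholds currently breached."""
        found = []
        p95 = self.p95_latency()
        rate = self.positive_rate()
        if p95 is not None and p95 > self.max_p95_ms:
            found.append("latency")
        if rate is not None and rate < self.min_positive_rate:
            found.append("feedback")
        return found


monitor = RetrievalMonitor(window=10)
for _ in range(10):
    monitor.record(latency_ms=120, thumbs_up=True)
print(monitor.alerts())  # []
for _ in range(10):
    monitor.record(latency_ms=2000, thumbs_up=False)
print(monitor.alerts())  # ['latency', 'feedback']
```

An alert like this would typically trigger a closer look at whether the knowledge base needs re-indexing or the retrieval parameters need retuning.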

AI Projects We’ve Developed

Fine-Tuning Llama 2 on COVID-19 Patient Data

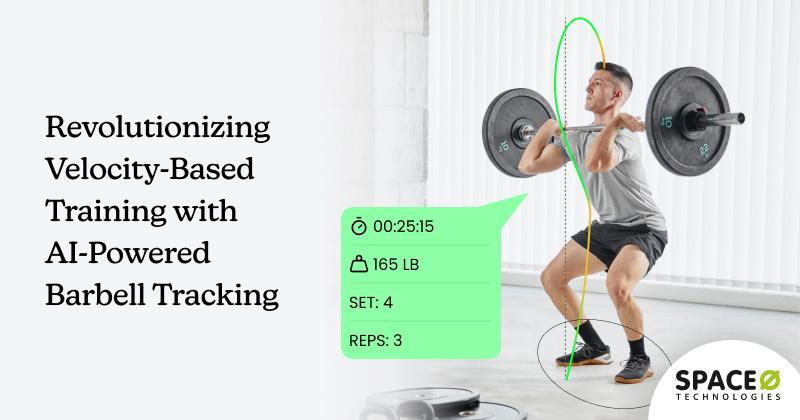

Revolutionizing Velocity-Based Training with AI-Powered Barbell Tracking

Discover how we developed an AI-powered barbell tracking app that revolutionizes velocity-based training with zero additional hardware requirements.

How We Built an AI-Powered Receptionist Enabling 24/7 Support and a 67% Reduction in Missed Inquiries

AI-powered receptionist development by Space-O Technologies using GPT-4o, React.js, Python, and Twilio. Contact us for your custom AI solution.

Client Testimonials

Project Summary

AI System Development for Christian Church

Space-O Technologies developed a private AI system for a Christian church. The team built a system capable of uploading research information, allowing other church workers to query information in a natural way.

Project Summary

AI System Development for Gift Search Company

Space-O Technologies has developed an AI system for a gift search company. The team has built a recommendation engine, implemented dynamic pricing, and created tools for personalized marketing campaigns.

Project Summary

POC Design & Dev for AI Technology Company

Space-O Technologies developed the POC of an AI product for life coaching conversations. Their work included wireframing, app design, engineering, and branding.

Project Summary

Custom Mobile App Dev & Design for Software Company

Space-O Technologies was hired by a software firm to build a photo editing app that caters to restaurant owners. The team handled the development and design work, including the addition of AI-driven features.

Types of RAG Solutions We Build

Every enterprise has different knowledge assets, query requirements, and compliance constraints. Our RAG developers specialize in building diverse categories of retrieval-augmented generation solutions, each optimized for specific business problems and data environments.

Enterprise Knowledge Base Systems

We build RAG-powered knowledge systems that give employees instant, accurate access to internal documentation, policies, procedures, and institutional knowledge through natural language queries. These systems replace inefficient keyword search with semantic retrieval, reducing time spent searching for information and ensuring consistent answers grounded in your authoritative source content.

RAG-powered Customer Support Chatbots

We develop intelligent customer support chatbots that retrieve answers directly from your product documentation, FAQs, and support history rather than generating generic responses. These systems handle complex, multi-turn customer queries with high accuracy, escalate appropriately when queries fall outside the knowledge base, and continuously improve from real support interactions over time.

Document Intelligence and QA Platforms

We build document intelligence platforms that enable analysts, researchers, and compliance teams to query large document repositories through natural language. These RAG systems process PDFs, contracts, reports, and regulatory filings, extract relevant passages with high precision, and synthesize comprehensive answers that cite specific source documents for traceability and audit compliance.

RAG Systems for Regulatory Compliance

We develop compliance-focused RAG systems that give legal, risk, and compliance teams accurate answers from regulatory frameworks, internal policies, and audit documentation. These systems are built with strict access controls, complete audit logging, and citation-level traceability, ensuring every response can be verified against its source for regulatory and legal requirements.

Multi-source Enterprise Search

We build unified enterprise search solutions that retrieve across multiple disconnected knowledge sources simultaneously, including SharePoint, Confluence, Salesforce, internal databases, and file storage systems. Users ask a single question and receive synthesized answers drawn from all relevant sources, eliminating the need to search each system separately and reducing information retrieval time significantly.

Agentic RAG for Workflow Automation

We develop agentic RAG systems where intelligent agents plan and execute multi-step retrieval workflows autonomously. These systems handle complex queries that require retrieving from multiple sources, validating retrieved information, performing reasoning steps, and synthesizing comprehensive responses. They integrate with business tools and APIs to trigger downstream actions based on retrieved knowledge.

Why Choose Space-O AI for RAG Development

Building accurate, production-grade RAG systems requires deep expertise in retrieval architecture, embedding optimization, and LLM integration. When you hire AI developers from Space-O AI, you are partnering with a team that has solved real retrieval challenges across enterprise deployments. Here is why organizations choose us as their RAG development partner.

15+ Years of AI Engineering Experience

We have been building AI systems since 2010, with deep expertise spanning machine learning, NLP, generative AI, and retrieval systems. Our RAG engineers bring hands-on experience across the full RAG stack, from data ingestion and chunking strategy through vector indexing, retrieval tuning, and production deployment, enabling faster implementation and more reliable outcomes on every engagement.

500+ Successful AI Projects Delivered

With over 500 AI projects delivered across diverse industries, we have encountered and solved the retrieval quality, latency, and accuracy challenges your project is likely to face. This experience translates into battle-tested architectural patterns, proven evaluation frameworks, and implementation decisions that reduce risk and accelerate time to production significantly.

80+ Certified RAG and AI Engineers

Our team includes certified AI engineers specializing in RAG architecture, LLM integration, vector database optimization, and agentic AI design. This depth of expertise ensures your project has access to specialized knowledge across every component of a production-grade RAG system, from embedding model selection through retrieval parameter tuning and production monitoring.

End-to-end RAG Ownership

From initial data assessment and architecture design through development, evaluation, deployment, and ongoing optimization, we own the complete RAG delivery lifecycle. We do not hand off at deployment. Your system continues to receive monitoring, retraining, and performance optimization as your knowledge base and query patterns evolve over time.

Enterprise-grade Security and Compliance

We build RAG systems with security embedded at every layer: data encryption at rest and in transit, role-based access controls, comprehensive audit logging, and compliance with GDPR, HIPAA, and SOC 2 requirements. Private deployment options ensure your proprietary data never leaves your infrastructure or reaches external model providers.

Transparent Development and Measurable Outcomes

We define evaluation benchmarks and success metrics before development begins, not after. Every RAG system we deliver is measured against retrieval precision, answer faithfulness, and latency benchmarks agreed upon during the discovery phase. Progress is tracked through weekly sprint reviews, transparent reporting, and real test results rather than qualitative impressions.

Technology Stack for RAG Development

Your RAG system performs only as well as the technologies powering it. We build retrieval-augmented generation systems using the most reliable, enterprise-proven tools across every layer of the RAG stack, from LLM selection through vector storage, orchestration, and production deployment.

Large Language Models

AI Frameworks & Orchestration

Machine Learning & Deep Learning

Natural Language Processing

Healthcare Interoperability

Cloud Platforms (HIPAA-Eligible)

Video & Communication

Vector Databases For RAG

Our RAG Development Process

Our development process follows a structured, iterative workflow that takes your RAG project from initial data assessment to production deployment, ensuring retrieval accuracy, seamless integration, and measurable performance at every stage.

Industries We Serve

As a leading RAG development company, we build retrieval-augmented generation systems across diverse industries. We understand the data environments, compliance requirements, and retrieval challenges specific to each sector, and we build RAG systems that deliver measurable results in your domain.

Healthcare

Healthcare organizations manage vast volumes of clinical guidelines, patient records, research literature, and regulatory documentation that clinicians and administrators struggle to access quickly. We build HIPAA-compliant RAG systems that enable accurate retrieval from clinical knowledge bases, power patient-facing information tools, and support administrative teams with instant access to compliance documentation and internal protocols.

Finance

Financial institutions need RAG systems that retrieve accurately from earnings reports, regulatory filings, market research, and internal investment policy documentation. We develop RAG pipelines for financial services that support investment research automation, regulatory compliance querying, and financial advisory workflows, enabling analysts and compliance teams to access grounded, cited answers from large document repositories with high precision and full auditability.

Legal

Law firms and corporate legal departments manage contracts, case law, regulatory frameworks, and compliance documentation at a scale that makes manual retrieval inefficient and error-prone. We build legal RAG systems that retrieve from large contract repositories, case law databases, and regulatory document libraries with high semantic accuracy, enabling attorneys and compliance professionals to surface relevant precedents and obligations in seconds.

E-commerce

E-commerce organizations need RAG systems that give support agents, merchandising teams, and customers accurate answers from product catalogs, inventory systems, policy documentation, and supplier agreements. We build retail RAG solutions that retrieve from multi-source product knowledge bases, power intelligent customer support tools, and enable internal teams to query operational data through natural language across any channel.

Manufacturing

Manufacturing organizations generate large volumes of technical documentation, maintenance manuals, quality standards, and process specifications that technicians and engineers need to access accurately in real time. We build RAG systems for manufacturing that retrieve from equipment manuals, quality standards, and production procedures, reducing downtime caused by slow information retrieval and enabling faster, more accurate field decision-making.

Education and Edtech

Education AI requires a partner that understands both the technical architecture and the compliance environment. We build HIPAA-compliant, SOC 2-certified RAG systems that process clinical documentation, medical literature, and institutional knowledge bases with the accuracy and auditability that patient safety and regulatory requirements demand. Our healthcare AI consulting team ensures every RAG system we deploy in clinical environments meets applicable data governance standards from day one.

Frequently Asked Questions About RAG Development

How is RAG different from a standard AI chatbot?

A standard AI chatbot generates responses from the model’s training data alone, which has a knowledge cutoff and no awareness of your proprietary business information. A RAG system retrieves from your specific knowledge base at inference time, ensuring responses are grounded in your current documentation and can cite the specific sources used to generate each answer. RAG systems are significantly more accurate for domain-specific queries, more trustworthy for compliance-sensitive applications, and more maintainable because you update the knowledge base rather than retrain the model when information changes.

How long does RAG development take?

Timeline depends on system complexity and scope. A proof-of-concept RAG system with a defined knowledge base and single integration point typically takes four to eight weeks. A production-grade RAG system with enterprise integrations, evaluation benchmarking, and deployment to production infrastructure typically takes three to five months. Enterprise multi-source RAG platforms with agentic retrieval, multi-modal support, and strict compliance requirements take six to twelve months. Contact our team for a timeline estimate based on your specific requirements and knowledge base characteristics.

What data sources can a RAG system retrieve from?

RAG systems can retrieve from virtually any data source that can be ingested and converted to text or embeddings. Common data sources include PDFs, Word documents, PowerPoint files, web pages, knowledge bases, SharePoint, Confluence, Notion, Salesforce, CRM databases, SQL and NoSQL databases, APIs, spreadsheets, and structured data files. Multi-modal RAG systems can also retrieve from images, charts, and tables embedded within documents. The key consideration is data access, quality, and preprocessing requirements, which vary significantly across source types and determine ingestion complexity.

How do you ensure retrieval accuracy in a RAG system?

We build retrieval accuracy through systematic evaluation at every stage of development. Before production deployment, we create evaluation datasets from real user queries, measure retrieval precision using RAGAS metrics and custom evaluation frameworks, test answer faithfulness against source documents, and benchmark latency under production load. We optimize chunking strategies, embedding model selection, retrieval parameters, and prompt templates based on evaluation results. We also set explicit accuracy thresholds that must be met before launch rather than deploying based on qualitative impressions of demo performance.

Can you build RAG systems that keep our data private?

Yes. We build private RAG deployments where all data processing, embedding generation, vector storage, and LLM inference happen within your infrastructure. Private deployments use self-hosted or VPC-deployed LLMs such as LLaMA or Azure OpenAI with private networking, self-managed vector databases, and no data transmission to external model providers. These architectures are fully GDPR, HIPAA, and SOC 2 compatible and appropriate for organizations with strict data residency requirements or proprietary data protection obligations that prohibit third-party data access.

Do you provide post-launch support for RAG systems?

Yes. We provide comprehensive post-launch support covering knowledge base updates and re-indexing, retrieval performance monitoring, periodic evaluation benchmarking, embedding model and retrieval parameter retuning, and model upgrades as newer, more capable LLMs become available. RAG systems require ongoing maintenance to remain accurate as your knowledge base evolves, and we treat post-launch optimization as a core part of the engagement rather than an optional add-on that requires a separate contract to activate.

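How does RAG integrate with our existing enterprise systems?

We connect RAG systems to your existing enterprise infrastructure, including CRM platforms, ERP systems, SharePoint, Confluence, Salesforce, and internal APIs. Our AI integration services handle secure authentication, real-time data synchronization, role-based access control, and middleware development so that data flows reliably between your RAG system and your connected business applications.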

What makes Space-O AI the right RAG development partner?

Space-O AI brings 15+ years of AI engineering experience, 500+ delivered AI projects, and a team of 80+ certified engineers with specific expertise in RAG architecture, LLM integration, and vector database optimization.

We define retrieval accuracy benchmarks before development starts, use rigorous evaluation frameworks before deployment, and provide ongoing support as your knowledge base evolves.

Our private deployment capabilities, enterprise compliance expertise, and transparent development process make us a reliable partner for organizations that need production-grade RAG systems with measurable, auditable performance that holds up under real enterprise use.
