Discover how we developed an AI-powered barbell tracking app that revolutionizes velocity-based training with zero additional hardware requirements.

Enterprise LLM Deployment Services We Offer

We deploy private LLMs built for enterprise workloads — on your hardware, inside your network, under your control. Every deployment is engineered for production throughput, security, and compliance from day one.

On-Premises LLM Deployment

We deploy a production-grade LLM directly onto your own GPU servers. Our team handles model selection, inference engine configuration, hardware optimization, and API setup from start to finish. Your model runs entirely inside your building with no external dependencies — no third-party API calls, no data leaving your network.

Private Cloud LLM Hosting

Not every organization is ready for on-premises GPU hardware. We deploy your private LLM inside a dedicated sovereign cloud environment with isolated compute, a private network, and a full data residency guarantee. Your model runs in your cloud, under your account, with no shared tenancy or external data flows.

Air-Gapped LLM Deployment

For defense, intelligence, and the highest-security enterprise environments, we deploy LLMs in fully disconnected air-gapped networks. There is no internet connectivity and zero external data flows. The deployment is designed to meet classified information handling requirements and to pass government security reviews.

Model Selection and Optimization

Not all open-weight models are equal, and picking the wrong one wastes time and budget. We benchmark candidate models against your real use cases: Llama 4 for large-context tasks, Mistral for GDPR-focused European deployments, Falcon 3 for multilingual needs, DeepSeek R1 for reasoning workloads. You get a data-backed model recommendation, not a guess.

Inference Engine Setup and Tuning

A poorly configured inference engine is why most internal private LLM attempts run too slowly. We configure and tune the right engine for your hardware: vLLM with PagedAttention for most enterprise deployments, TensorRT-LLM for peak NVIDIA GPU performance, TGI for Hugging Face ecosystem compatibility, and NVIDIA Triton Inference Server for multi-model serving. The result is consistent low-latency responses under real production load.

LLM API Gateway and Integration

We build the secure API layer that connects your deployed LLM to your enterprise applications. Authentication, rate limiting, usage logging, and multi-tenant access control are all configured at setup. Your teams call the model through a standard REST or gRPC interface, and every request is logged with a full audit trail.
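
As a rough illustration of the rate limiting and multi-tenant access control described above, the sketch below keeps one token bucket per tenant. The class names, the `tenant_id` key, and the rate and capacity values are hypothetical and chosen for illustration only. A production gateway would normally rely on its own rate-limiting layer rather than hand-written code.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows `rate` requests per second, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity      # start with a full bucket
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class TenantRateLimiter:
    """Keeps one bucket per tenant so one team cannot starve another."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._rate = rate
        self._capacity = capacity
        self._clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, tenant_id: str) -> bool:
        bucket = self._buckets.get(tenant_id)
        if bucket is None:
            bucket = TokenBucket(self._rate, self._capacity, self._clock)
            self._buckets[tenant_id] = bucket
        return bucket.allow()
```

A gateway handler would call `limiter.allow(tenant_id)` before forwarding a request to the model and return HTTP 429 when it yields `False`. The injectable `clock` exists only to make the limiter easy to test.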

LLM Security Hardening

We apply zero-trust security architecture to every deployment. That means encrypted data in transit and at rest, role-based access controls (RBAC), input validation against OWASP LLM Top 10 threats, network segmentation, and ongoing CVE monitoring for your model and inference stack. Security is not added at the end — it is built into the deployment from step one.
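
To make the RBAC and input validation controls above more concrete, here is a minimal sketch, assuming a deny-by-default permission table and a basic prompt sanitizer. The role names, permission strings, and `MAX_PROMPT_CHARS` limit are hypothetical. Real mitigations for the OWASP LLM Top 10 go well beyond length and character checks.

```python
from typing import Dict, FrozenSet

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "analyst": frozenset({"chat:invoke"}),
    "engineer": frozenset({"chat:invoke", "embeddings:invoke"}),
    "admin": frozenset({"chat:invoke", "embeddings:invoke", "models:manage"}),
}

MAX_PROMPT_CHARS = 8000  # assumed limit, tune per deployment


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles receive no permissions."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


def validate_prompt(prompt: str) -> str:
    """Strip non-printable characters and enforce basic size limits."""
    cleaned = "".join(
        ch for ch in prompt if ch.isprintable() or ch in "\n\t"
    )
    if not cleaned.strip():
        raise ValueError("prompt is empty")
    if len(cleaned) > MAX_PROMPT_CHARS:
        raise ValueError("prompt exceeds maximum length")
    return cleaned
```

Both checks would run in the API layer before a request reaches the inference engine, so a rejected request never consumes GPU time.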

Quantization and Inference Optimization

Model quantization lets you run larger models on smaller hardware budgets — or get more requests per second from the GPU capacity you already have. We apply INT8, INT4, GPTQ, or AWQ quantization depending on your hardware profile and quality requirements. Quality loss is minimal. Throughput and cost savings are significant.
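
The hardware effect of quantization can be estimated with simple arithmetic. The sketch below assumes a hypothetical 70-billion-parameter model and counts weight storage only. It ignores the KV cache, activations, and the small overhead that GPTQ and AWQ add for scales and zero points, so real footprints will be somewhat higher.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Hypothetical 70B-parameter model at three precisions.
for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{label}: {weight_memory_gb(70, bits):.0f} GB")
# FP16: 140 GB
# INT8: 70 GB
# INT4: 35 GB
```

Under these assumptions, moving from FP16 to INT4 cuts weight memory by a factor of four, which is what allows a larger model to fit on fewer GPUs.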

LLM Monitoring and MLOps Setup

A deployed LLM needs ongoing management to stay healthy in production. We set up request logging, latency tracking, throughput dashboards, drift detection, and alert systems. We also configure MLOps pipelines for model version management and scheduled updates — so your private LLM stays current without manual effort.
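
As one possible shape for the latency tracking and alerting described above, the sketch below keeps a rolling window of request latencies and flags when the 95th percentile crosses a threshold. The class name, window size, and threshold are hypothetical. A real deployment would usually export these metrics to a monitoring system instead of computing them in process.

```python
import math
from collections import deque
from typing import Deque, Optional


class LatencyMonitor:
    """Rolling-window latency tracker with a p95 alert threshold."""

    def __init__(self, window: int = 1000,
                 p95_threshold_ms: float = 2000.0) -> None:
        # deque(maxlen=...) drops the oldest sample automatically.
        self._samples: Deque[float] = deque(maxlen=window)
        self._threshold = p95_threshold_ms

    def record(self, latency_ms: float) -> None:
        self._samples.append(latency_ms)

    def percentile(self, pct: float) -> Optional[float]:
        """Nearest-rank percentile over the current window."""
        if not self._samples:
            return None
        ordered = sorted(self._samples)
        rank = max(1, math.ceil(pct * len(ordered) / 100))
        return ordered[rank - 1]

    def should_alert(self) -> bool:
        p95 = self.percentile(95)
        return p95 is not None and p95 > self._threshold
```

Calling `record()` once per completed request and polling `should_alert()` on a schedule is enough to surface a latency regression before most users notice it.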

AI Projects We’ve Developed

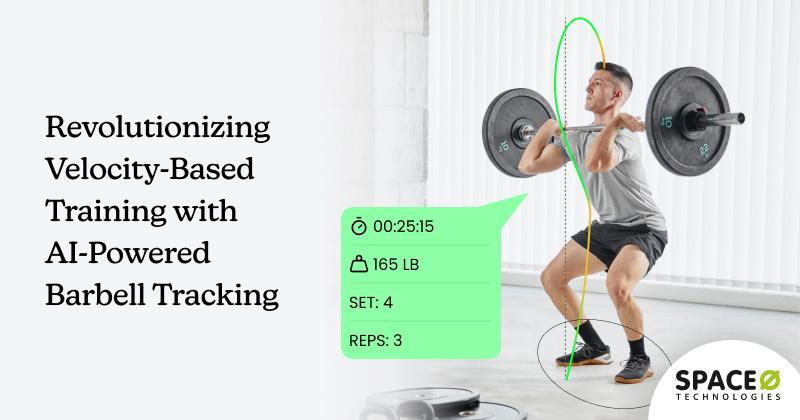

Revolutionizing Velocity-Based Training with AI-Powered Barbell Tracking

Fine-Tuning Llama 2 on COVID-19 Patient Data

Discover how we fine-tuned Llama 2 to automate COVID-19 patient data analysis and accurately prescribe the right treatment and medication.

WhatsApp-Based AI Chatbot Development for Quick Data Retrieval

Discover how a roofing company streamlined customer interactions with a WhatsApp-based AI chatbot. Learn how quick data retrieval improved efficiency and satisfaction.

Client Testimonials

Project Summary

AI System Development for Christian Church

Space-O Technologies developed a private AI system for a Christian church. The team built a system that lets staff upload research material and lets other church workers query it in natural language.

AI System Development for Gift Search Company

Space-O Technologies has developed an AI system for a gift search company. The team has built a recommendation engine, implemented dynamic pricing, and created tools for personalized marketing campaigns.

POC Design & Dev for AI Technology Company

Space-O Technologies developed the POC of an AI product for life coaching conversations. Their work included wireframing, app design, engineering, and branding.

Custom Mobile App Dev & Design for Software Company

Space-O Technologies was hired by a software firm to build a photo editing app that caters to restaurant owners. The team handled the development and design work, including the addition of AI-driven features.

What You Get When We Deploy Your Enterprise LLM

Every LLM deployment engagement with Space-O includes a complete set of technical deliverables. You get a working system and full documentation — so your team can operate it confidently without depending on us.

Production-Ready LLM Inference Stack

A fully operational private LLM running inside your environment — configured, tested, and optimized for your throughput and latency requirements. This is not a proof of concept. It is ready for real workloads from day one.

Model Selection and Benchmarking Report

A detailed evaluation of candidate open-weight models tested against your actual use cases, data types, language requirements, and hardware. You get benchmark results, quality comparisons, and a clear recommendation with the reasoning behind it.

Inference Engine Configuration

A fully tuned inference engine — vLLM, TensorRT-LLM, TGI, or NVIDIA Triton — with optimized concurrency settings, KV cache configuration, batching strategy, and quantization applied for your specific hardware. This is what separates a fast production system from a slow internal experiment.
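
KV cache configuration largely comes down to arithmetic on the model's shape. The sketch below estimates how many tokens fit in a given amount of free GPU memory. The model dimensions (32 layers, 8 key-value heads, head dimension 128) and the 20 GiB budget are illustrative assumptions, not figures from a specific deployment.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int,
                             head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes of KV cache one token occupies across all layers.

    The leading factor of 2 covers the key and the value tensor that
    each layer stores. bytes_per_value=2 assumes FP16 or BF16.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def max_cached_tokens(free_gpu_bytes: int, per_token_bytes: int) -> int:
    """Upper bound on tokens the cache can hold across all requests."""
    return free_gpu_bytes // per_token_bytes


# Illustrative model shape and memory budget (assumptions).
per_token = kv_cache_bytes_per_token(num_layers=32, num_kv_heads=8,
                                     head_dim=128)
budget = 20 * 1024**3  # 20 GiB left after loading weights
print(per_token)                             # 131072 bytes (128 KiB)
print(max_cached_tokens(budget, per_token))  # 163840 tokens
```

Dividing that token budget by the expected context length per request gives a first estimate of sustainable concurrency, which is then refined by load testing.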

Secure API Gateway

A production-grade API layer connecting your applications to the deployed LLM. Authentication, authorization, rate limiting, usage logging, and multi-tenant access control are all built in. Standard REST and gRPC endpoints let your existing systems connect without custom integration work.

Security Hardening Documentation

A full record of every security control applied during deployment: encryption settings, RBAC policies, network segmentation design, OWASP LLM Top 10 mitigations, and penetration test results. Your CISO and security team get everything they need to review and sign off.

Compliance Configuration Package

Architecture diagrams, data flow maps, and compliance control documentation aligned to your specific regulations — GDPR, HIPAA, EU AI Act, or DPDP. Your legal team and Data Protection Officer receive a ready-to-review package, not a set of informal notes.

MLOps and Monitoring Setup

Dashboards, alert configurations, and automated pipeline scripts for request logging, latency monitoring, throughput tracking, and drift detection. Your operations team can see what is happening with the LLM at any time and get alerts before problems affect users.

Integration Guide and API Documentation

Complete technical documentation for your engineering teams — API reference, authentication setup, sample code, error handling patterns, and integration guides for your existing enterprise systems. New developers can get started without needing to call us.

Runbook and Operations Handbook

A step-by-step operations guide covering day-to-day management, patching procedures, capacity scaling, incident response, backup and recovery, and escalation contacts. Your internal team can run the system independently after handover.

Awards and Recognitions

Why Enterprises Choose Space-O for LLM Deployment

Production Deployments, Not Proofs of Concept

We build LLMs for real enterprise workloads — high concurrency, consistent low latency, production monitoring, and full operational documentation. If your current private LLM runs well in testing but breaks under real traffic, that is an inference configuration problem. We solve it.

Inference Engine Expertise Across All Major Frameworks

Our deployment engineers have hands-on experience with vLLM, TensorRT-LLM, TGI, and NVIDIA Triton Inference Server. We choose the right engine for your hardware and workload — not the one we default to for every client. Engine selection directly affects throughput, latency, and cost.

Hardware-Agnostic Deployment

We deploy on NVIDIA H100 and H200 clusters, AMD MI300X systems, co-located GPU servers, and sovereign cloud instances. We are not tied to one hardware vendor. If you already have GPU infrastructure, we work with it. If you need hardware, we advise on procurement and configuration.

Compliance Built Into the Deployment, Not Added After

GDPR, HIPAA, EU AI Act, and DPDP controls are configured during the deployment — not retroactively applied. Audit logging, data residency controls, access policies, and compliance documentation are all part of the standard engagement, not optional add-ons.

Zero Data Leaves Your Boundary

The entire deployment happens inside your environment, under your access controls. Your data never passes through Space-O infrastructure or any external service, whether during deployment, testing, or ongoing operations.

Open-Weight Models You Own Permanently

Every model we deploy is an open-weight model: Llama 4, Mistral, Falcon 3, DeepSeek R1, Gemma 2, Qwen 2.5. You own the model weights. There is no subscription, no API contract to renew, and no risk of the model being deprecated by a vendor. The model is yours, forever.

6 to 12 Weeks From Scoping to Production

Our deployment process moves from the first scoping call to a live production system in 6 to 12 weeks. That is faster than an internal build and without the risk of choosing the wrong architecture halfway through. Most clients are surprised at how quickly a well-planned deployment moves.

Inference Optimization That Delivers Real Throughput

We regularly achieve 14 to 24 times throughput improvement over baseline model serving through vLLM PagedAttention, model quantization, request batching optimization, and hardware-specific tuning. If your previous private LLM attempt was too slow, this is why — and it is a solvable problem.

90-Day Production Support Included

Post-deployment support is included in every engagement, not sold separately. The 90-day support period covers production stability, CVE patching, model updates, and direct access to the deployment team. An ongoing managed service option is also available if you want Space-O to continue managing the infrastructure.

How We Deploy Your Enterprise LLM

We follow a six-step deployment process that moves fast, keeps stakeholders informed, and produces a production-ready system — not just a working demo.

LLM Deployment Technology Stack

We deploy using the best open-source and open-weight tools available — no proprietary lock-in, no vendor dependency. Every tool in your stack is one your team can own and operate independently.

Open-Weight LLMs

Inference And Serving Frameworks

MLOps And Orchestration

Vector Databases (RAG)

Security And Compliance Tooling

Industries We Serve With Enterprise LLM Deployment

We deploy private LLMs for regulated industries where data cannot leave the organization’s controlled environment. Our sovereign AI development services and enterprise LLM deployment experience span every major regulated sector.

Healthcare And Life Sciences

We deploy HIPAA-compliant private LLMs for clinical documentation, medical coding, patient record analysis, and clinical decision support. PHI stays on-premises. Every request is audit-logged. The stack integrates with your EHR system and meets HIPAA requirements for data handling and retention.

Government And Public Sector

On-premises and air-gapped LLM deployment for citizen services, document processing, policy analysis, and intelligence applications. We guarantee citizen data residency and use open-weight models that create no foreign vendor dependency. Deployments meet national security and procurement standards. This connects directly to our broader sovereign AI consulting services for government digital transformation.

Banking And Financial Services

Private LLM deployment for fraud detection, regulatory reporting, contract analysis, and internal knowledge management. We configure deployments to GDPR, DORA, and PCI-DSS standards. Sensitive financial data stays inside the bank’s infrastructure at every step.

Legal And Professional Services

Private LLM for contract review, legal research, document summarization, and due diligence. Attorney-client privilege is maintained because client data never leaves the firm’s environment. Every interaction is logged, and access is controlled at the matter level.

Manufacturing And Industrial

On-premises LLM for technical documentation search, maintenance knowledge management, quality control reporting, and supply chain analysis. Operational data stays inside the facility network. The deployment runs on standard enterprise hardware and requires no specialized AI infrastructure knowledge on your side.

Technology And SaaS Companies

We integrate private LLMs into SaaS products where enterprise clients demand data sovereignty. Multi-tenant isolation ensures each enterprise customer’s data is separated. This lets you offer secure generative AI infrastructure as a product feature and win regulated-industry deals that competitors with cloud-only AI cannot close.

Insurance

Private LLM deployment for claims processing, underwriting support, policy document analysis, and fraud detection. Sensitive policyholder data stays inside the organization’s environment. Deployments are configured for SOC 2 and GDPR compliance as your jurisdiction requires.

Defense And Intelligence

Air-gapped LLM deployment for classified document analysis, intelligence processing, and decision support. Complete network isolation, physical security protocols, and classified information handling compliance. Deployments are designed to pass government security review on first submission.

Energy And Critical Infrastructure

Secure LLM deployment for technical knowledge management, maintenance support, and operational reporting. OT/IT boundary controls are configured from the start. Resilience-first architecture means the system stays operational even under network stress or partial infrastructure failure.

Frequently Asked Questions About Enterprise LLM Deployment

What is enterprise LLM deployment?

Enterprise LLM deployment means setting up a large language model inside your own infrastructure — your servers, your network, your control. Your teams and applications use the AI without sending data to third-party APIs like OpenAI or Anthropic. The model runs in your environment and your data never leaves it.

How long does it take to deploy a private LLM?

A rapid deployment takes 6 to 8 weeks. A full enterprise stack with integrations and compliance documentation takes 10 to 14 weeks. The biggest factors are how ready your infrastructure is and how many systems the LLM needs to connect to.

What does enterprise LLM deployment cost?

Rapid deployment engagements start in the $50,000 to $80,000 range. Full enterprise stack engagements typically range from $100,000 to $250,000, depending on scope and integrations. The managed private LLM service starts at $8,000 per month. These figures are confirmed during your scoping call, once we understand your specific requirements.

Do we need our own GPU hardware?

No. We can deploy on hardware you already have, help you procure and configure new GPU servers, or deploy inside a sovereign cloud environment. We scope the best option based on your budget, throughput needs, and compliance requirements.

What open-weight models do you deploy?

We deploy Llama 4, Mistral and Mixtral, Falcon 3, DeepSeek R1, Gemma 2, Qwen 2.5, and Command R+. The right model depends on your use case, language requirements, hardware, and compliance context. We run benchmarks and give you a data-backed recommendation before deployment begins.

Is a private LLM as capable as ChatGPT or Claude?

For specific enterprise tasks — document analysis, internal knowledge retrieval, structured data processing, coding assistance — a well-deployed private LLM with a RAG pipeline performs at the same level or better. General-purpose benchmarks favor frontier models, but enterprise use cases are specific. We match the model to the task, not the headline benchmark.

How do you handle compliance during deployment?

Compliance controls are part of the deployment, not an afterthought. We configure data residency, audit logging, RBAC access controls, and encryption aligned to your specific regulation — GDPR, HIPAA, EU AI Act, or DPDP. You receive a compliance documentation package your legal team and DPO can review before go-live.

What happens after the deployment is complete?

Every engagement includes a 90-day production support period. You also get full documentation: API docs, operations runbook, integration guides, and monitoring dashboards. Your internal team can run the system independently. If you want ongoing management, our Managed Private LLM service is available.