AI Infrastructure Engineering Services We Offer

We design and build the AI infrastructure stack that enterprise models, agents, and pipelines run on. GPU clusters, networking, storage, orchestration, and security are all engineered for production from the start, not retrofitted after a pilot that outgrew its original configuration.

GPU cluster design and configuration

We design your GPU cluster architecture from the ground up. This covers hardware selection across NVIDIA H100/H200/B200, AMD MI300X, DGX and HGX systems, NVLink and InfiniBand networking configuration, memory and storage sizing, and topology design for your specific AI workloads. Getting the cluster architecture right before procurement avoids costly CapEx mistakes that are very difficult to correct once hardware is on the floor.

AI Kubernetes platform setup

We build the Kubernetes platform your AI workloads run on: NVIDIA device plugin installation, GPU topology-aware scheduling, Multi-Instance GPU (MIG) configuration, KServe or Ray Serve model serving setup, and namespace isolation for multi-tenant workloads. The platform is load-tested before handover, not just installed and handed over. This is a production AI Kubernetes platform, not a lab configuration.
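As a rough illustration of what a GPU workload request looks like on such a platform, the sketch below builds a minimal Pod manifest in Python. The `nvidia.com/gpu` resource name is the one the NVIDIA device plugin advertises; the pod name, image, and namespace are hypothetical placeholders, and a real deployment would layer scheduling, MIG, and serving configuration on top.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int, namespace: str) -> dict:
    """Build a minimal Pod manifest that requests whole GPUs."""
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        # The namespace provides the tenant boundary (hypothetical name below).
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resource exposed by the NVIDIA device plugin;
                    # GPUs are requested under `limits`.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Hypothetical values, for illustration only.
manifest = gpu_pod_manifest(
    name="llm-server",
    image="registry.example.com/llm-server:latest",
    gpus=1,
    namespace="team-a",
)
print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with standard Kubernetes tooling.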

AI data pipeline architecture

We design and build the data ingestion, preprocessing, embedding, and retrieval pipelines that feed your AI models. Structured, unstructured, and real-time data sources are all handled within a single coherent pipeline architecture. Pipelines are built using Apache Airflow or Prefect, with RBAC applied at the retrieval layer to prevent unauthorized access to sensitive data.
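To make the idea of RBAC at the retrieval layer concrete, here is a minimal Python sketch that filters retrieved documents against the caller's roles before anything reaches the model. The `Document` type, role names, and sample records are hypothetical; a production pipeline would usually enforce this inside the vector store query rather than after it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


def authorized_results(results, user_roles):
    """Keep only documents whose role list overlaps the user's roles."""
    roles = frozenset(user_roles)
    return [doc for doc in results if doc.allowed_roles & roles]


# Hypothetical retrieval output, for illustration only.
retrieved = [
    Document("hr-001", "Salary bands", frozenset({"hr"})),
    Document("eng-014", "Deployment guide", frozenset({"engineering", "hr"})),
    Document("fin-203", "Quarterly forecast", frozenset({"finance"})),
]

visible = authorized_results(retrieved, {"engineering"})
print([doc.doc_id for doc in visible])
```

Filtering before prompt assembly means a document the user cannot read never enters the model's context.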

Vector database and RAG infrastructure

We set up and configure the vector store that powers your retrieval-augmented generation workloads. We deploy Weaviate for flexible open-source configurations, Milvus for high-performance similarity search at scale, and pgvector for organizations already running PostgreSQL. Indexing strategies, reranking configurations, and metadata filtering are configured for production workloads, not demonstration data.
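The sketch below shows, in plain Python, what similarity search with metadata filtering does conceptually: filter candidates by metadata, score them by cosine similarity, and return the top matches. It is a brute-force illustration with hypothetical data, not the API of Weaviate, Milvus, or pgvector, which use approximate indexes to do this at scale.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(index, query, top_k, metadata_filter=None):
    """Return up to top_k (score, id) pairs, best match first."""
    candidates = [
        item
        for item in index
        if metadata_filter is None
        or all(item["metadata"].get(k) == v for k, v in metadata_filter.items())
    ]
    scored = [(cosine_similarity(query, item["vector"]), item["id"]) for item in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Tiny hypothetical index with 2-dimensional embeddings.
index = [
    {"id": "a", "vector": [1.0, 0.0], "metadata": {"lang": "en"}},
    {"id": "b", "vector": [0.0, 1.0], "metadata": {"lang": "en"}},
    {"id": "c", "vector": [1.0, 0.1], "metadata": {"lang": "de"}},
]

hits = search(index, [1.0, 0.0], top_k=2, metadata_filter={"lang": "en"})
print(hits)
```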

Storage architecture for AI workloads

We design the storage stack for your AI environment: NVMe flash arrays for fast model loading, MinIO S3-compatible object storage for model artifacts and training data, and Ceph distributed storage for large-scale data lakes. Storage I/O bottlenecks are one of the most common causes of underperforming AI infrastructure. A 70B parameter model loading from standard block storage can take four minutes or more; from properly configured NVMe storage, that drops to under 30 seconds. We eliminate the bottleneck before it affects production.
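The load-time figures above can be sanity-checked with simple arithmetic. The sketch below assumes the 70B model is stored at 2 bytes per parameter (FP16/BF16), which is an assumption not stated above, and computes the sustained read throughput each load time implies. It ignores deserialization and host-to-GPU transfer overhead.

```python
def weights_size_gb(num_params: int, bytes_per_param: int) -> float:
    """Size of the model weights in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def required_throughput_gb_s(size_gb: float, load_seconds: float) -> float:
    """Sustained read throughput needed to load size_gb in load_seconds."""
    return size_gb / load_seconds


# Assumption: 70B parameters at 2 bytes each (FP16/BF16).
size_gb = weights_size_gb(70_000_000_000, 2)

slow = required_throughput_gb_s(size_gb, 4 * 60)  # four-minute load
fast = required_throughput_gb_s(size_gb, 30)      # 30-second load

print(f"weights: {size_gb:.0f} GB")
print(f"4 min load implies about {slow:.2f} GB/s")
print(f"30 s load implies about {fast:.2f} GB/s")
```

Under that assumption, a four-minute load corresponds to well under 1 GB/s of sustained reads, while a 30-second load needs several GB/s.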

MLOps and model management platform

We build the MLOps infrastructure your team needs to manage AI models at production scale: MLflow for experiment tracking and model registry, Kubeflow for pipeline orchestration, DVC for dataset versioning, and Weights and Biases (self-hosted) for team collaboration. Model promotion, rollback, and update procedures are fully documented so your team can manage the model lifecycle without relying on external support for routine operations.
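A promotion and rollback procedure can be pictured as a small state machine. The following sketch is an in-memory stand-in written for illustration only: the `ModelRegistry` class and its methods are hypothetical and are not the MLflow API.

```python
class ModelRegistry:
    """Hypothetical in-memory registry tracking production promotions."""

    def __init__(self):
        # model name -> versions promoted to production, oldest first
        self._history = {}

    def promote(self, name: str, version: str) -> None:
        """Record a new production version for the model."""
        self._history.setdefault(name, []).append(version)

    def production_version(self, name: str):
        """Return the current production version, or None if never promoted."""
        history = self._history.get(name)
        return history[-1] if history else None

    def rollback(self, name: str) -> str:
        """Drop the current production version and return the previous one."""
        history = self._history.get(name, [])
        if len(history) < 2:
            raise RuntimeError(f"no earlier version of {name!r} to roll back to")
        history.pop()
        return history[-1]


registry = ModelRegistry()
registry.promote("support-assistant", "v1")
registry.promote("support-assistant", "v2")
print(registry.production_version("support-assistant"))
print(registry.rollback("support-assistant"))
```

Keeping the promotion history explicit is what makes rollback a routine operation rather than an emergency rebuild.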

AI monitoring and observability stack

We configure production-grade monitoring for your entire AI infrastructure: GPU utilization, memory pressure, inference latency percentiles, token throughput, KV cache utilization, and request queuing. Prometheus and Grafana dashboards are built for LLM-specific workload metrics, not generic server health panels that miss the metrics that matter for AI workloads. Alert thresholds are set against your defined SLAs before the platform goes live.
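As a small illustration of latency percentiles checked against SLA thresholds, the sketch below uses Python's standard `statistics.quantiles`. The sample latencies and threshold values are hypothetical; in the stack described here, the equivalent logic would live in Prometheus queries and alert rules.

```python
import statistics


def latency_percentiles(samples_ms):
    """Compute p50, p95, and p99 from a list of latency samples."""
    # n=100 yields 99 cut points; index i-1 is the i-th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def sla_breaches(percentiles, thresholds_ms):
    """Return the names of percentiles that exceed their threshold."""
    return [name for name, limit in thresholds_ms.items() if percentiles[name] > limit]


# Hypothetical inference latencies in milliseconds, with one slow outlier.
samples = [120, 135, 150, 110, 160, 145, 130, 900, 140, 125]

pcts = latency_percentiles(samples)
breaches = sla_breaches(pcts, {"p50": 200, "p99": 500})
print(pcts)
print(breaches)
```

The median looks healthy here while the tail breaches its threshold, which is why percentile-based alerts catch problems that averages hide.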

Zero-trust security infrastructure

We apply zero-trust network architecture to your entire AI stack from the start of the build. This means micro-segmentation between inference, data, and management planes; HashiCorp Vault for secrets management; Open Policy Agent for policy enforcement; mTLS for internal service communication; and RBAC for all platform access. Security is applied at the infrastructure layer in the original build, not added as a retrofit after the platform is running.
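The core of micro-segmentation is a default-deny rule set: traffic between planes is rejected unless it is explicitly allowed and the caller is authenticated. The sketch below expresses that idea in Python. The specific allowed flows are hypothetical, and in practice such rules would be written as network policies and Open Policy Agent policies rather than application code.

```python
# Hypothetical allowlist of (source plane, destination plane) pairs.
ALLOWED_FLOWS = {
    ("management", "inference"),
    ("inference", "data"),
}


def is_allowed(source_plane: str, dest_plane: str, mtls_verified: bool) -> bool:
    """Default-deny check: permit only authenticated, allowlisted flows."""
    if not mtls_verified:
        return False  # unauthenticated callers are always rejected
    return (source_plane, dest_plane) in ALLOWED_FLOWS


print(is_allowed("inference", "data", mtls_verified=True))
print(is_allowed("data", "inference", mtls_verified=True))
print(is_allowed("inference", "data", mtls_verified=False))
```

Because the allowlist is directional, a permitted flow from inference to data does not imply the reverse.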

AI infrastructure assessment and remediation

For organizations that have already procured hardware but are not getting the performance or compliance posture they expected, we assess the current GPU cluster configuration, identify bottlenecks in GPU scheduling, storage I/O, and network throughput, produce a prioritized remediation plan, and implement the fixes. You do not need to rebuild from scratch or write off hardware that is already procured.

AI Projects We’ve Developed

-

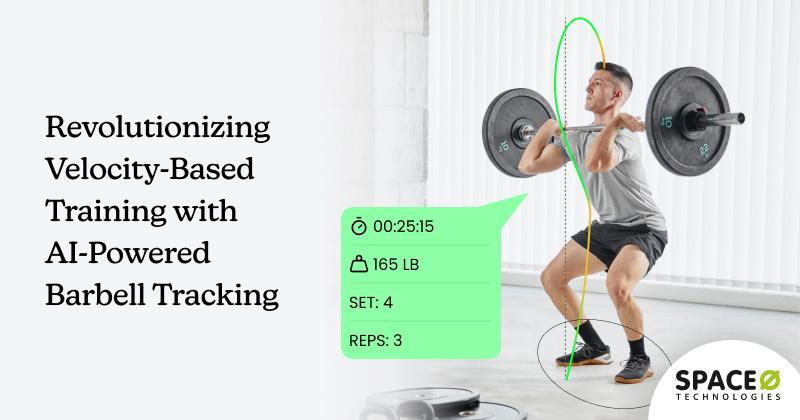

Revolutionizing Velocity-Based Training with AI-Powered Barbell Tracking

-

Fine-Tuning Llama 2 on COVID-19 Patient Data

Discover how we fine-tuned Llama 2 to automate COVID-19 patient data analysis and accurately prescribe the right treatment and medication.

-

WhatsApp-Based AI Chatbot Development for Quick Data Retrieval

Discover how a roofing company streamlined customer interactions with a WhatsApp-based AI chatbot. Learn how quick data retrieval improved efficiency and satisfaction

Client Testimonials

Project Summary

AI System Development for Christian Church

Space-O Technologies developed a private AI system for a Christian church. The team built a system that lets staff upload research information and lets other church workers query it in natural language.

AI System Development for Gift Search Company

Space-O Technologies has developed an AI system for a gift search company. The team has built a recommendation engine, implemented dynamic pricing, and created tools for personalized marketing campaigns.

POC Design & Dev for AI Technology Company

Space-O Technologies developed the POC of an AI product for life coaching conversations. Their work included wireframing, app design, engineering, and branding.

Custom Mobile App Dev & Design for Software Company

Space-O Technologies was hired by a software firm to build a photo editing app that caters to restaurant owners. The team handled the development and design work, including the addition of AI-driven features.

What You Get When We Build Your AI Infrastructure

Every infrastructure engagement with Space-O produces a specific, documented set of deliverables. Nothing is left undocumented at handover.

GPU cluster architecture document

A complete technical specification of your GPU infrastructure: hardware selection rationale, cluster topology, NVLink and InfiniBand networking design, storage configuration, and capacity planning for your defined workloads. This document serves as the long-term reference for your AI platform and the starting point for any future scaling decisions.

Configured Kubernetes AI platform

A production-ready Kubernetes cluster with NVIDIA device plugin, GPU topology-aware scheduling, MIG configuration where applicable, KServe or Ray Serve model serving, and namespace isolation for multi-tenant workloads. The platform is load-tested before handover. All Kubernetes configurations and manifests are included in the documentation package.

AI data pipeline implementation

Fully operational ingestion, preprocessing, embedding, and retrieval pipelines for your defined data sources: Apache Airflow or Prefect DAGs, vector store configured and populated with production-representative data, RBAC applied at the retrieval layer, and a complete pipeline runbook your team can operate and extend without external support.

MLOps platform

A deployed and configured MLOps stack: MLflow model registry, Kubeflow or Argo Workflows for pipeline orchestration, DVC for dataset versioning, and model promotion and rollback procedures documented in full. Your team has everything needed to manage AI models in production from day one.

Monitoring and observability dashboards

Prometheus and Grafana dashboards configured for LLM-specific workload metrics: GPU utilization, inference latency percentiles, token throughput, KV cache utilization, request queuing, and alert thresholds aligned to your defined SLAs. Your team knows when something is wrong before users experience the impact.

Security architecture documentation

A complete record of every security control applied to the infrastructure: zero-trust network design diagrams, RBAC policies, secrets management configuration, network segmentation maps, mTLS certificate setup, and OWASP LLM Top 10 mitigations applied at the inference layer. This document supports internal security review and external audit requirements.

Compliance configuration package

Architecture diagrams, data flow maps, and control documentation aligned to your applicable regulations. GDPR, HIPAA, EU AI Act, and DPDP compliance packages are produced as standard deliverables in every engagement, structured to go directly to your legal, compliance, and DPO teams for review.

Infrastructure operations runbook

A step-by-step guide covering day-to-day GPU cluster management, Kubernetes node maintenance, model storage procedures, capacity scaling, incident response, backup and recovery, and upgrade pathways. Your team has a clear reference document for every standard operational scenario.

Knowledge transfer and team training

Structured handover sessions covering Kubernetes GPU management, MLOps workflows, monitoring dashboards, and security procedures. The engagement is designed to transfer capability, not create dependency. Your internal team is fully capable of operating and extending the infrastructure after handover.

Why Enterprises Choose Space-O for AI Infrastructure Engineering

Production infrastructure, not lab setups

We build AI infrastructure designed for real enterprise workloads: high-throughput inference, multi-tenant Kubernetes clusters, NVMe storage for fast model loading, and monitoring stacks configured for LLM-specific metrics. Proof-of-concept configurations that pass testing but fail under production traffic are not what we deliver. Every platform is load-tested against your defined throughput targets before handover.

Deep GPU cluster expertise

We configure NVIDIA H100/H200/B200 and AMD MI300X clusters with the specificity that enterprise AI workloads demand: NVLink topology, InfiniBand fabric configuration, CUDA-aware networking, MIG partitioning, and GPU memory optimization for specific model sizes. Generic infrastructure administrators applying standard server patterns to GPU workloads consistently produce underperforming clusters.

Hardware-agnostic design

We design for NVIDIA DGX systems, HGX server nodes, AMD MI300X platforms, and co-located GPU hardware. No hardware vendor lock-in, and no forced upgrades. We work with your existing hardware or scope the right procurement for your workload without preference for any particular vendor.

CapEx economics built in

On-premises AI infrastructure typically recovers its cost versus cloud AI rental in approximately 10 to 15 months of continuous use at enterprise scale. For workloads running 24/7 at high GPU utilization, ownership is significantly cheaper long-term than renting cloud GPU capacity.
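The break-even point is the upfront hardware cost divided by the monthly saving over cloud rental. The sketch below shows that calculation; every figure in it is a hypothetical placeholder chosen for illustration, not a quote or a measured result.

```python
def break_even_months(capex: float, monthly_opex: float, monthly_cloud_cost: float):
    """Months until owned hardware costs less than equivalent cloud rental.

    Returns None when owning never pays back (no monthly saving).
    """
    monthly_saving = monthly_cloud_cost - monthly_opex
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


# Hypothetical inputs, for illustration only.
months = break_even_months(
    capex=3_000_000,             # hardware purchase and installation
    monthly_opex=50_000,         # power, cooling, hosting, staff
    monthly_cloud_cost=300_000,  # renting equivalent GPU capacity
)
print(months)
```

The result is sensitive to utilization: if the GPUs sit idle, the equivalent cloud bill shrinks and the break-even point moves out.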

Zero-trust security from day one

Security is applied at the infrastructure layer, not added after go-live. Zero-trust network architecture, micro-segmentation, RBAC, secrets management, and compliance documentation are all part of the build engagement. Retrofitting security onto a running AI infrastructure platform is significantly harder than designing it in, so we design it in from the start.

Full knowledge transfer guaranteed

We do not build infrastructure that your team cannot operate. Every configuration is documented, every runbook is written, and every handover includes structured training sessions. The engagement ends with your team owning the platform. This is a commitment, not a general intention.

Compliance documentation as a deliverable

Architecture diagrams, data flow maps, and control documentation aligned to your specific regulations are produced as standard deliverables in every engagement. Your legal and compliance teams have what they need to approve AI workloads from day one. Compliance reviews that run for months without documentation can stall AI programs entirely, so documentation ships with every Space-O engagement.

Infrastructure assessment for existing hardware

For organizations that have already procured GPU hardware but are not getting expected performance or compliance posture, we assess, diagnose, and fix without requiring a rebuild. Our infrastructure assessment engagement covers performance auditing, configuration review, bottleneck diagnosis, and compliance gap analysis, followed by a prioritized remediation plan and implementation.

End-to-end sovereign AI delivery

AI infrastructure engineering is one layer of the sovereign AI stack. Clients who build their AI infrastructure with Space-O have a direct path to enterprise LLM deployment services on the same platform. For organizations that want strategic guidance before or alongside the build, our sovereign AI consulting services run in parallel with the infrastructure engagement.

How We Build Your AI Infrastructure

We follow a six-step deployment process that moves fast, keeps stakeholders informed, and produces a production-ready system, not just a working demo.

AI Infrastructure Technology Stack

We deploy using the best open-source and open-weight tools available, with no proprietary lock-in and no vendor dependency. Every tool in your stack is one your team can own and operate independently.

Open-Weight LLMs

Inference And Serving Frameworks

MLOps And Orchestration

Vector Databases (RAG)

Security And Compliance Tooling

Industries We Serve with AI Infrastructure Engineering

We deploy private LLMs for regulated industries where data cannot leave the organization’s controlled environment. Our sovereign AI development services and enterprise LLM deployment experience span every major regulated sector.

Healthcare And Life Sciences

We build HIPAA-compliant AI infrastructure for clinical AI workloads. All protected health information stays on-premises, with RBAC applied at the data pipeline and retrieval layer and a full audit trail for every AI operation. Infrastructure is reviewed and approved for HIPAA compliance before any sensitive data is ingested, removing the compliance review bottleneck that typically delays healthcare AI programs by months.

Government And Public Sector

We build on-premises and air-gapped AI infrastructure for government AI programs: citizen data residency guaranteed, an open-source software stack with no foreign vendor dependency, and compliance documentation aligned to government security classification requirements. Air-gapped configurations are available for the highest-sensitivity workloads.

Banking And Financial Services

We build GPU cluster and AI data pipeline infrastructure for a sector where data residency and inference latency are both critical. Zero-trust architecture, GDPR and DORA compliance documentation, and sub-200ms inference latency SLAs are designed into the infrastructure from the start, not added during a post-build compliance review.

Legal And Professional Services

We build private AI infrastructure for law firms and professional services organizations where attorney-client privilege and data confidentiality are non-negotiable requirements. All compute, storage, and data processing occurs within the firm’s controlled environment. Client data never traverses any network or system outside firm ownership.

Manufacturing And Industrial

We build on-premises AI infrastructure for operational technology (OT) environments, with OT/IT boundary controls designed into the architecture from the start. Air-gapped configurations are available for facilities where external connectivity is prohibited by operational or regulatory requirements. Low-latency inference is designed in for real-time manufacturing applications.

Technology And SaaS Companies

We build multi-tenant AI infrastructure for SaaS platforms that need to offer private AI as a product feature. Tenant data isolation is designed in from the start, with customer-specific data residency options and horizontal scaling capability as the enterprise client base grows. This infrastructure layer enables sovereign AI development services to be delivered as a product offering to enterprise end customers.

Insurance

We build AI infrastructure for the compliance requirements and data volumes that insurance operations generate: SOC 2 and GDPR-aligned design; high-throughput data pipelines for claims processing, underwriting workflows, and fraud detection; and monitoring stacks built for the latency and accuracy SLAs of insurance AI applications.

Defense And Intelligence

We build air-gapped GPU cluster infrastructure for classified AI workloads. Complete network isolation, physical security protocols integrated into the design, and compliance with classified information handling requirements are all included. No internet-connected components are present in any configuration serving classified data.

Energy And Critical Infrastructure

We build secure AI infrastructure for energy operators: OT/IT boundary controls, resilience-first design with redundant compute and storage, and sector-specific regulatory compliance documentation. High-availability configurations are standard for critical infrastructure environments where infrastructure downtime has direct operational consequences.

Frequently Asked Questions About AI Infrastructure Engineering

What is AI infrastructure engineering?

AI infrastructure engineering is the design, build, and configuration of the hardware and software stack that enterprise AI models run on. This includes GPU clusters, high-speed networking, storage systems, Kubernetes orchestration, data ingestion and retrieval pipelines, MLOps platforms, monitoring, and security controls. It is the foundation layer. Without it, no AI model can run reliably at production scale inside your own environment.

Do we need our own GPU hardware?

Not necessarily to start. We can build around hardware you already own, advise on the right GPU hardware for your specific workload and budget, or design infrastructure in a sovereign cloud environment where the hardware is provided by a compliant hosting partner. We scope the best option based on your workload volume, compliance requirements, and CapEx/OpEx preferences.

What GPU hardware do you work with?

We design and configure infrastructure using NVIDIA H100, H200, and B200 SXM GPUs, AMD MI300X, NVIDIA DGX H100/H200 systems, and HGX H100/H200 server nodes. Our infrastructure design is hardware-agnostic. We work with what makes the most sense for your workload and budget, without preference for any particular hardware vendor.

How long does it take to build AI infrastructure?

An infrastructure assessment takes two to three weeks. A full infrastructure build covering GPU cluster configuration, Kubernetes platform, data pipeline, MLOps, and security typically takes 10 to 16 weeks depending on scope and hardware lead times. Large GPU hardware orders can take 8 to 16 weeks to arrive after procurement approval. Planning hardware procurement early is critical to keeping the overall build timeline on track.

What is the ROI on building private AI infrastructure?

On-premises GPU infrastructure typically reaches cost break-even versus cloud AI rental in 10 to 15 months of continuous use at enterprise scale. For workloads running 24/7 at high utilization, ownership costs significantly less long-term than renting cloud GPU capacity. In many regulated industries, data residency requirements mean building private infrastructure is a compliance obligation regardless of the cost comparison. We build the financial model with you as part of the scoping process.

Can you work with infrastructure our team has already set up?

Yes. Our infrastructure assessment engagement is designed for organizations that have set up GPU infrastructure but are not getting the performance or compliance posture they expected. We assess the current configuration, identify what is causing the problem, produce a remediation plan, and implement the fixes. You do not need to rebuild from scratch or write off hardware you have already procured.

How do you handle security and compliance?

Zero-trust network architecture, RBAC, secrets management, mTLS, and network segmentation are applied as part of the build, not as a separate engagement afterward. We produce compliance documentation aligned to your applicable regulations (GDPR, HIPAA, EU AI Act, DPDP) as a standard deliverable in every engagement. This documentation is structured to be handed directly to your legal, compliance, and DPO review teams, formatted for their review process rather than for an engineering audience.